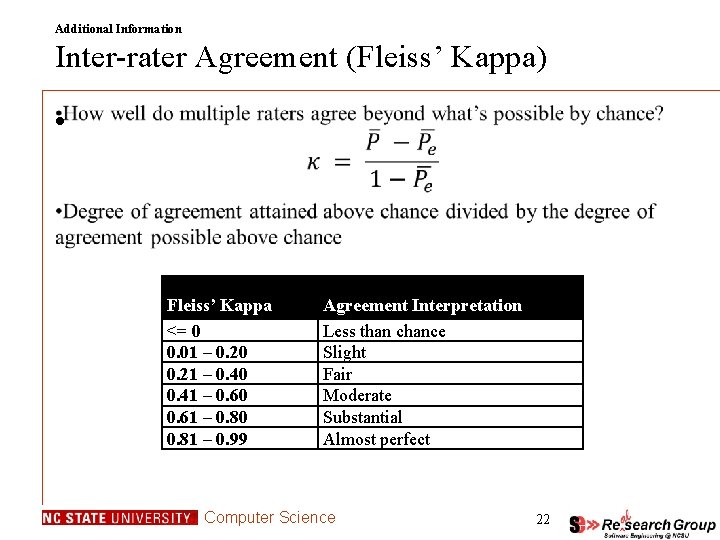

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

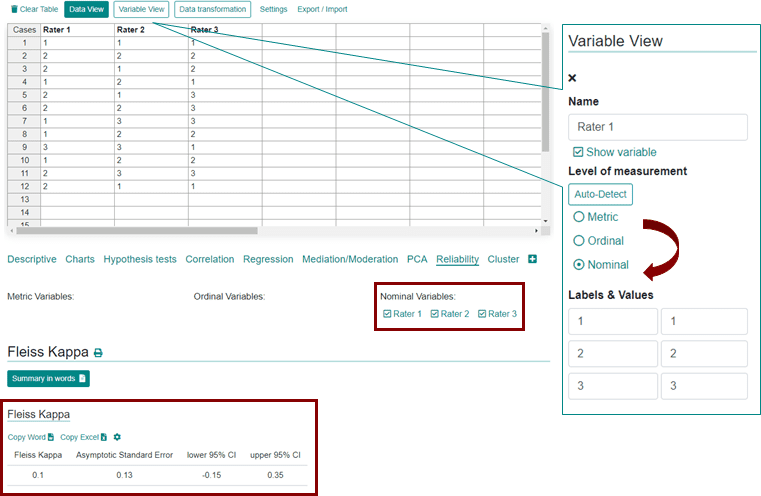

GitHub - efi/fleiss-kappa: A tiny, MIT-licensed java implementation of the "Fleiss Kappa" measure for the inter-rater reliability of categorical ratings represented as either int[][] or long[][]

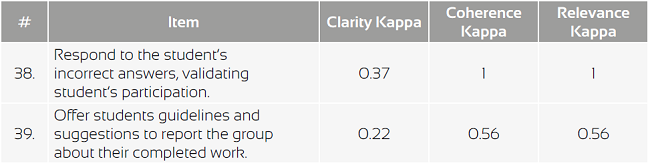

![Fuzzy Fleiss-kappa for Comparison of Fuzzy Classifiers (PDF) | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/8aa54d5299fb48d6a7355c766573ecb520a43393/5-Table3-1.png)